NEW DELHI: In the rapidly evolving landscape of digital medicine, health AI faces a new expectation of inclusivity. At the Federated Intelligence Hackathon on Health AI held this week at IIT Kanpur, national health leaders issued a clear directive: AI models must be rigorously tested on diverse, population-scale datasets before they are deployed in patient care.

Dr. Sunil Kumar Barnwal, CEO of the National Health Authority (NHA), emphasized that the shift from experimental AI to clinical reality requires more than clever algorithms. It requires data and testing that mirror India’s own demographic complexity.

“India is moving from experimentation to building benchmarked and reliable AI models for healthcare,” Dr. Barnwal stated during the pre-event for the India AI Impact Summit 2026. “AI solutions must be context-ready and reflect India’s demographic and regional diversity to ensure trust, accuracy, and inclusion.”

The Diversity Gap in Medical AI

For years, a “hidden bias” has affected medical AI worldwide. Many early algorithms were trained predominantly on data from Western populations or specific urban centers. When these models are applied to other ethnic groups, rural populations, or different age groups, their accuracy can drop sharply. This problem is usually described as dataset shift: the population a model meets in deployment differs from the one it learned from.

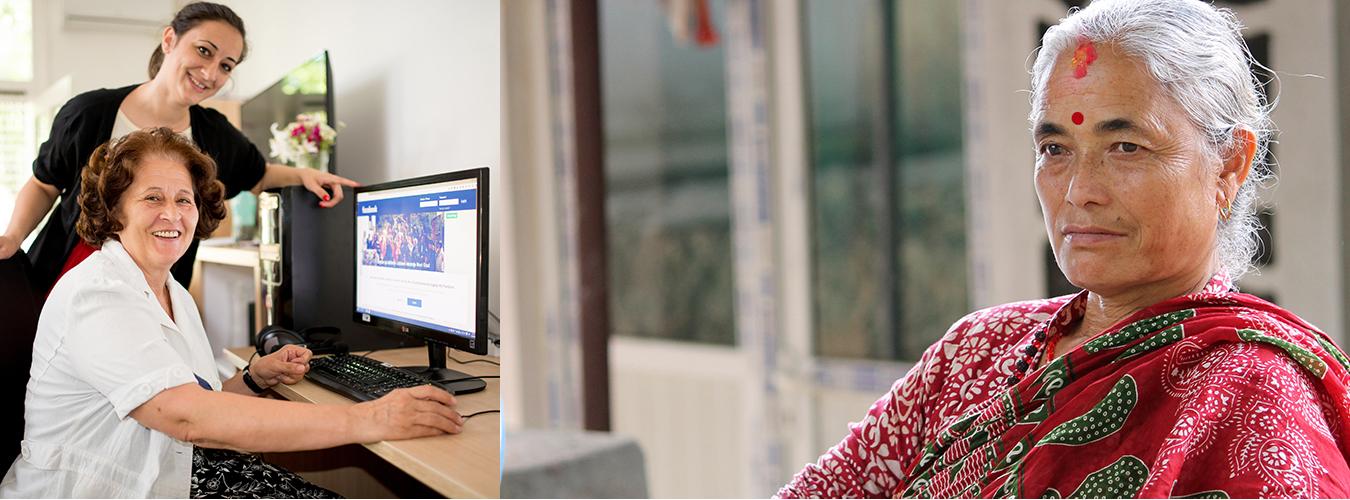

By demanding “population-scale” testing, the NHA is addressing a critical public health risk. In a country like India, where genetic diversity, dietary habits, and regional health challenges vary wildly from Kerala to Kashmir, a “one-size-fits-all” AI could lead to misdiagnosis or inequitable care.
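In practice, population-scale testing usually means reporting a model’s performance separately for each subgroup rather than as a single overall number. The sketch below is a hypothetical illustration: the region labels, the toy predictions, and the 5-percentage-point gap threshold are assumptions chosen for the example, not NHA criteria.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """Return {group: accuracy} for records of (group, true_label, predicted_label)."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, truth, pred in records:
        total[group] += 1
        correct[group] += int(truth == pred)
    return {g: correct[g] / total[g] for g in total}


def flag_gaps(per_group, overall, max_gap=0.05):
    """List groups whose accuracy falls more than max_gap below the overall figure.

    max_gap is an illustrative threshold, not a regulatory standard.
    """
    return sorted(g for g, acc in per_group.items() if overall - acc > max_gap)


# Hypothetical toy data: (region, true diagnosis, model prediction)
records = [
    ("urban", 1, 1), ("urban", 0, 0), ("urban", 1, 1), ("urban", 0, 0),
    ("rural", 1, 0), ("rural", 0, 0), ("rural", 1, 1), ("rural", 1, 0),
]

overall = sum(t == p for _, t, p in records) / len(records)
per_group = accuracy_by_group(records)
print(f"overall: {overall:.2f}")
for group, acc in sorted(per_group.items()):
    print(f"{group}: {acc:.2f}")
print("groups needing review:", flag_gaps(per_group, overall))
```

Here a single overall accuracy of 75% would hide the fact that the model is right only half the time for the rural subgroup, which is exactly the kind of gap subgroup reporting is meant to expose.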

Moving Toward Federated Intelligence

A primary challenge in training these large models is privacy. How can researchers learn from millions of health records without compromising patient confidentiality? The approach highlighted at the hackathon is federated learning.

Unlike traditional AI training, which requires moving all data to one central server (creating a massive target for cyberattacks), federated learning allows the AI to “visit” the data where it lives—in hospitals and local clinics.

- Data Stays Local: Sensitive health records never leave their original secure environment.
- The Model Travels: The AI algorithm learns from the local data and sends back only what it has learned (model updates such as adjusted parameters, not the data itself) to a central hub, which combines the updates from many sites.
- Privacy by Design: This consent-driven approach aligns with the Ayushman Bharat Digital Mission (ABDM) and is intended to ensure that innovation does not come at the cost of patient privacy or digital sovereignty.
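The “data stays local, model travels” pattern is commonly implemented with federated averaging (FedAvg). The sketch below is a deliberately simplified, hypothetical illustration: a one-parameter linear model, made-up hospital datasets, and plain Python in place of a real federated learning framework. It is not the system used at the hackathon.

```python
# Hypothetical FedAvg sketch: each site fits y = w * x on its own data,
# and only the updated weight (never the raw records) is sent to the hub.


def local_update(w, data, lr=0.01, epochs=20):
    """Run gradient descent on mean squared error using only this site's data."""
    for _ in range(epochs):
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w


def federated_average(updates):
    """Combine site weights, weighting each site by its number of records."""
    total = sum(n for _, n in updates)
    return sum(w * n for w, n in updates) / total


# Made-up local datasets; in a real deployment these never leave each site.
hospitals = {
    "hospital_a": [(1.0, 2.1), (2.0, 3.9), (3.0, 6.2)],
    "hospital_b": [(1.5, 3.0), (2.5, 5.1)],
    "clinic_c": [(0.5, 1.0), (4.0, 8.1), (3.5, 6.9), (2.0, 4.0)],
}

global_w = 0.0
for round_num in range(1, 6):
    # Each site starts from the current global model and trains locally.
    updates = [(local_update(global_w, data), len(data)) for data in hospitals.values()]
    # The hub sees only (weight, record_count) pairs, not patient data.
    global_w = federated_average(updates)
    print(f"round {round_num}: global w = {global_w:.3f}")
```

After a few rounds the shared weight settles close to 2, the trend present in every site’s data, even though no site ever shared its records. Real deployments typically add further protections, such as secure aggregation, because model updates themselves can leak some information.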

Expert Perspectives: Infrastructure and Sovereignty

The hackathon, held from January 19 to January 24, 2026, brought together researchers from the ICMR–National Institute for Research in Digital Health and Data Science (NIRDHDS) and IIT Kanpur.

Dr. R.S. Sharma, former CEO of the NHA and a pioneer of India’s digital public infrastructure, pointed to shared, interoperable infrastructure as the foundation for trustworthy health AI. “Digital Public Infrastructure and interoperable Digital Public Goods are key to building secure, scalable, and citizen-centric health data systems,” Sharma remarked.

Adding to the call for self-reliance, Vivek Raghavan, CEO of Sarvam AI, stressed the need for indigenous, open-source AI models. He argued that relying on external, foreign-developed AI systems creates a “sovereignty gap,” in which the underlying logic of a diagnostic tool may be neither transparent nor tailored to local medical needs.

What This Means for Patients and Providers

For the average citizen enrolled in Ayushman Bharat (AB PM-JAY), these developments are more than technical jargon; they point toward safer care.

1. Improved Diagnostic Accuracy

When an AI tool is trained on “diverse datasets,” it is more likely to have seen cases involving people of your age, your regional background, and your socio-economic context. This reduces the chance that the tool misclassifies your case or misses a diagnosis because people like you were underrepresented in its training data.

2. Enhanced Privacy

With the federated systems discussed at IIT Kanpur, patients’ raw health records can stay with the hospitals and clinics that hold them instead of being pooled in a single central repository, removing one major target for breaches and misuse.

3. Faster Access to Specialist Care

Once these models are benchmarked at scale, rural clinics may eventually be able to use AI-assisted tools to screen for conditions such as diabetic retinopathy or early-stage tuberculosis with accuracy approaching that of a city-based specialist.
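Claims that a screening tool matches a specialist are usually assessed with two numbers measured against specialist-confirmed diagnoses: sensitivity (the share of true cases the tool catches) and specificity (the share of people without the condition it correctly clears). The counts below are invented purely for illustration and do not describe any real tool.

```python
def screening_metrics(tp, fn, tn, fp):
    """Return (sensitivity, specificity) from confusion-matrix counts."""
    sensitivity = tp / (tp + fn)  # true cases correctly flagged
    specificity = tn / (tn + fp)  # non-cases correctly cleared
    return sensitivity, specificity


# Hypothetical results for 1,000 screened patients, checked against specialist grading.
sens, spec = screening_metrics(tp=90, fn=10, tn=855, fp=45)
print(f"sensitivity = {sens:.1%}, specificity = {spec:.1%}")
```

For screening, sensitivity is often weighted heavily, because a missed case may go untreated, while a false alarm usually leads to a confirmatory test.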

Potential Limitations and the Road Ahead

While the vision is ambitious, experts warn of several hurdles:

- Digital Divide: Scaling AI requires consistent internet access and digital literacy among healthcare workers in “last-mile” rural areas.
- Data Quality: AI is only as good as the data it learns from. If health records are entered incorrectly at the source, both the model and its “benchmarked” accuracy figures will be unreliable.
- Ethics and Liability: As AI evolves, the legal frameworks governing “AI-led medical errors” must be clearly defined to protect both patients and doctors.
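The data-quality concern is commonly addressed with plausibility checks at the point of entry, before records reach any training or benchmarking pipeline. The sketch below is hypothetical: the field names and ranges are illustrative assumptions, not ABDM or NHA specifications, and are not clinical reference values.

```python
# Hypothetical plausibility ranges; real limits would come from clinical experts.
PLAUSIBLE_RANGES = {
    "age_years": (0, 120),
    "fasting_glucose_mg_dl": (20, 600),
    "systolic_bp_mm_hg": (50, 260),
}


def validate_record(record):
    """Return a list of problems found in one manually entered health record."""
    problems = []
    for field, (low, high) in PLAUSIBLE_RANGES.items():
        value = record.get(field)
        if value is None:
            problems.append(f"{field}: missing")
        elif not low <= value <= high:
            problems.append(f"{field}: {value} outside {low}-{high}")
    return problems


records = [
    {"age_years": 54, "fasting_glucose_mg_dl": 132, "systolic_bp_mm_hg": 138},
    {"age_years": 540, "fasting_glucose_mg_dl": 13.2},  # likely typing errors
]
for i, rec in enumerate(records):
    print(i, validate_record(rec) or "ok")
```

Checks like these cannot catch every error (a plausible but wrong value passes), but they flag obvious slips such as a misplaced decimal point before they distort a model’s reported accuracy.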

As Prof. Sandeep Verma of the Gangwal School of Medical Sciences and Technology at IIT Kanpur noted, collaboration between technology and research institutions is no longer optional; it is the backbone of the modern digital health ecosystem.

References

- https://tennews.in/ai-systems-must-be-tested-on-diverse-population-scale-datasets-before-deployment-nha-ceo/

Medical Disclaimer: This article is for informational purposes only and should not be considered medical advice. Always consult with qualified healthcare professionals before making any health-related decisions or changes to your treatment plan. The information presented here is based on current research and expert opinions, which may evolve as new evidence emerges.