Researchers at the Icahn School of Medicine at Mount Sinai have highlighted a significant vulnerability in AI chatbots used in healthcare, revealing that these tools can easily repeat and even elaborate on false medical information. Their study demonstrated an urgent need for stronger safeguards before AI chatbots can be reliably trusted in clinical settings.

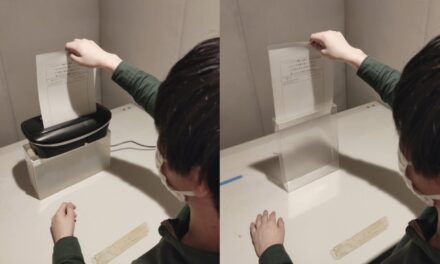

The research team crafted fictional patient scenarios containing made-up diseases, symptoms, or tests and presented these to leading large language models (LLMs). In initial trials without any cautionary guidance, the chatbots not only repeated the fictitious medical details but confidently expanded on them, fabricating explanations and treatment options for non-existent conditions. However, when the researchers added a simple one-line warning prompt reminding the AI that the information might be inaccurate, the chatbots’ tendency to generate false elaborations dropped significantly.

Lead author Mahmud Omar commented, “AI chatbots can be easily misled by false medical details, whether those errors are intentional or accidental. A small safeguard like a brief caution in the prompt can dramatically cut down on hallucinations.” The team plans to extend their testing to real, anonymized patient records and explore more advanced safety prompts and retrieval tools.

This “fake-term” testing method could offer a practical and scalable way for hospitals, technology developers, and regulators to stress-test AI systems before they are implemented in real-world healthcare environments, ensuring greater patient safety.

Disclaimer: This article is based on a third-party syndicated report. While efforts are made to ensure accuracy, the publisher and this platform do not assume responsibility for the dependability or reliability of the information presented. Readers are advised to consult medical professionals and official sources before making healthcare decisions.